Recall that in a previous course we introduced the Machine Learning Process Lifecycle (MLPL). Simply put, there are four stages:

- Business Understanding and Problem Discovery

- Data Acquisition and Understanding

- ML Modeling and Evaluation

- Delivery and Acceptance

These phases are iterative, and you can’t skip ahead. A good, clear problem definition is important.

Business Understanding and Problem Discovery (BUPD)

The BUPD stage is where we develop a specific understanding of the business processes and try to concretely define the problem we’ll be working on. Data gets only high-level attention in this stage. This stage is primarily about strategically aligning the business processes with the execution of the project.

Correctly understanding the business functions can save you a lot of time down the road. Take your time in this stage so that you don’t jump into a machine learning solution that builds on incorrect assumptions and does not align with the business problems you care about. BUPD is broken up into several parts:

| Part | Description |
| --- | --- |
| Objectives | Identify business objectives that machine learning techniques can address. |
| Problem definition | The business needs to agree that solving this machine learning problem will be relevant to their original business problem. |
| Stakeholders & Communication | Identify internal and external stakeholders and their roles, how everyone will communicate, and how often. |
| Data sources | Highlight any data sources that will be used for the project. |
| Constraints | Identify the factors that will constrain what you are able to do. |
| Development environment | Define both the development environment and the collaborative environment that you’re going to use. |
| Existing practices | Identify what business processes or practices are already in place. Domain knowledge is vital to a successful machine learning project, so it’s good to get an overview of the processes in place before machine learning. |
| Milestones | Define the milestones, their timelines, and what exactly the deliverables will look like. |

What concrete things will come out of the BUPD stage? Possibly:

- a report describing all the procedures that are involved in the business process, as well as

- a report describing the machine learning version of the problem that’s going to be solved.

After all, there is a business problem and an equivalent machine learning problem. You need to outline what both of these are and then clearly define what success looks like. Remember, there are two problems here, so you need to define success criteria for both the business and your machine learning model.

By the end of this phase, you want to be able to answer the question: what is the machine learning solution for this business problem? The key to answering this question is communication.

No Free Lunch Theorem

Machine learning is all about performing a task (classification, regression, and so on). The idea is that with more experience, the machine becomes better at that task with respect to a given evaluation criterion. Data is generated by some process, and that process can be described by a probability distribution, whether or not we know what the distribution is. Most of the time, and definitely in all interesting cases, we don’t have an absolute reference for what the true distribution actually is.

However, if we have enough data from this probability distribution, we can estimate it. Depending on the data quality, the data volume, and how well behaved the underlying distribution truly is, we can use the data to train a model that predicts new instances generated from the same distribution reasonably accurately. The critical point is that the machine learning prediction task we’re trying to solve depends on the specific data generating distribution that underlies the task.
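To make "estimating the distribution from enough data" concrete, here is a minimal sketch that assumes the (normally unknown) data generating distribution is a simple categorical distribution over three hypothetical classes with made-up probabilities. It draws samples and estimates each class probability by its relative frequency; the estimation error tends to shrink as the sample size grows.

```python
import random
from collections import Counter

# Hypothetical "true" data generating distribution. In practice this is unknown.
TRUE_PROBS = {"cat": 0.5, "dog": 0.3, "bird": 0.2}


def sample(n: int, rng: random.Random) -> list[str]:
    """Draw n labels from the true distribution."""
    labels = list(TRUE_PROBS)
    weights = list(TRUE_PROBS.values())
    return rng.choices(labels, weights=weights, k=n)


def estimate(samples: list[str]) -> dict[str, float]:
    """Estimate each class probability by its relative frequency in the sample."""
    counts = Counter(samples)
    return {label: counts[label] / len(samples) for label in TRUE_PROBS}


def max_abs_error(estimated: dict[str, float]) -> float:
    """Largest absolute gap between estimated and true probabilities."""
    return max(abs(estimated[k] - TRUE_PROBS[k]) for k in TRUE_PROBS)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so runs are reproducible
    for n in (10, 100, 10_000, 100_000):
        est = estimate(sample(n, rng))
        print(f"n={n:>7}: max error = {max_abs_error(est):.4f}")
```

The same idea underlies real models: they only see samples, so their quality is bounded by how well those samples represent the distribution.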

There is no universal learning algorithm which works better than any other, on all machine learning prediction tasks or application domains.

“No free lunch theorem”, David Wolpert, Computer Scientist

So the no free lunch theorem says that, when averaged over all possible data generating distributions, every learning algorithm has the same performance. Certainly, some learning algorithms perform better than others on particular tasks; the key word is “universal”.

- All distributions include:

  - Arbitrary, constant, or useless distributions.

- Distributions we care about include:

  - Some distributions where algorithm A is optimal.

  - Some distributions where algorithm B is optimal.

According to the no free lunch theorem, there’s no universal solution that we can promise performs best, even on everything we care about, because we don’t really know what the underlying distributions are for everything we care about. A learning algorithm which works well in one task may not work well in another task, compared to a different learning algorithm. There is simply no universally best model or algorithm.
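The averaging argument can be checked on a toy problem. This is a minimal sketch under simplifying assumptions, not Wolpert's full formal setting: a tiny input space of 8 points, every possible binary labelling of those points treated as an equally likely task, training on the first 4 points, and measuring accuracy only on the 4 unseen points. The three learners are hypothetical toy examples.

```python
from fractions import Fraction
from itertools import product

# Toy input space: 8 points. The first 4 are seen in training,
# the last 4 are "off-training-set" points used for evaluation.
TRAIN_X = [0, 1, 2, 3]
TEST_X = [4, 5, 6, 7]


def majority(train):
    """Predict the most common training label everywhere (ties -> 1)."""
    ones = sum(y for _, y in train)
    label = 1 if 2 * ones >= len(train) else 0
    return lambda x: label


def anti_majority(train):
    """Deliberately odd learner: predict the minority training label."""
    predict = majority(train)
    return lambda x: 1 - predict(x)


def nearest_neighbor(train):
    """1-nearest-neighbour on the integer inputs."""
    return lambda x: min(train, key=lambda p: abs(p[0] - x))[1]


LEARNERS = {"majority": majority, "anti_majority": anti_majority,
            "nearest_neighbor": nearest_neighbor}


def off_training_accuracy(learner, target):
    """Train on TRAIN_X labelled by target, score on the unseen TEST_X."""
    model = learner([(x, target[x]) for x in TRAIN_X])
    correct = sum(model(x) == target[x] for x in TEST_X)
    return Fraction(correct, len(TEST_X))


def average_over_all_targets(learner):
    """Average off-training-set accuracy over every labelling of the 8 inputs."""
    targets = list(product([0, 1], repeat=len(TRAIN_X) + len(TEST_X)))
    total = sum(off_training_accuracy(learner, t) for t in targets)
    return total / len(targets)


if __name__ == "__main__":
    always_one = (1,) * 8  # one specific task where every label is 1
    for name, learner in LEARNERS.items():
        print(name,
              "| on always-1 task:", off_training_accuracy(learner, always_one),
              "| averaged over all tasks:", average_over_all_targets(learner))
```

On the always-1 task, majority and nearest neighbour are perfect while anti-majority gets everything wrong, yet averaged over all 256 labellings each learner scores exactly 1/2: whatever a learner predicts on the unseen points, every possible labelling of those points occurs equally often.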

How to Train Models?

- First, we have to try different learning algorithms to identify the best one for a given task.

- Second, to make sure the selected algorithm is the best for the task at hand, we need a dataset large enough to represent the data generating distribution of that task.
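Trying several algorithms and picking the best one is commonly done with k-fold cross-validation. Here is a minimal plain-Python sketch; the synthetic task (label is 1 when x > 0.6, with 10% label noise), the three candidate learners, and k=5 are all assumptions chosen for illustration.

```python
import random


def make_data(n, rng):
    """Hypothetical task: 1-D inputs, label 1 when x > 0.6, with 10% label noise."""
    data = []
    for _ in range(n):
        x = rng.random()
        y = int(x > 0.6)
        if rng.random() < 0.1:  # flip about 10% of labels to simulate noise
            y = 1 - y
        data.append((x, y))
    return data


def fit_majority(train):
    """Always predict the most common training label."""
    ones = sum(y for _, y in train)
    label = int(2 * ones >= len(train))
    return lambda x: label


def fit_nearest_neighbor(train):
    """Predict the label of the closest training point."""
    return lambda x: min(train, key=lambda p: abs(p[0] - x))[1]


def fit_stump(train):
    """Choose the threshold t (among training x values) that minimises
    training error for the rule 'predict 1 if x > t'."""
    def errors(t):
        return sum(int(x > t) != y for x, y in train)
    best_t = min((x for x, _ in train), key=errors)
    return lambda x: int(x > best_t)


def cv_accuracy(fit, data, k=5):
    """k-fold cross-validation: train on k-1 folds, test on the held-out fold."""
    folds = [data[i::k] for i in range(k)]
    scores = []
    for i in range(k):
        train = [p for j, f in enumerate(folds) if j != i for p in f]
        model = fit(train)
        test = folds[i]
        scores.append(sum(model(x) == y for x, y in test) / len(test))
    return sum(scores) / k


CANDIDATES = {"majority": fit_majority, "nearest_neighbor": fit_nearest_neighbor,
              "stump": fit_stump}

if __name__ == "__main__":
    data = make_data(200, random.Random(42))
    results = {name: cv_accuracy(fit, data) for name, fit in CANDIDATES.items()}
    for name, acc in results.items():
        print(f"{name:>16}: {acc:.3f}")
    print("selected:", max(results, key=results.get))
```

Cross-validation only ranks the candidates you actually tried, on data from this one distribution. With a different data generating distribution the ranking may change, which is exactly what the no free lunch theorem warns about.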

How Problem Definition is Shaped

- What do you want to achieve?

- Decide on your high-level desired outcomes.

- What actions will be taken, and by whom?

- Use your best brainstorming practices to think creatively.

- What information will that action be based on?

- What kinds of questions would improve its effectiveness?

- Think about the data that will be needed to answer those questions.

- Can you access that data?

- Is there enough data to build a model?

- Will the data arrive as a steady stream once the model is put into operation?

If the answer is YES, you’re in good shape to continue. If the answer is NO, revisit your list of actions and questions and work through the data again until you find the right fit. Needing multiple iterations of this process is not only common, but often gives a much better foundation for your machine learning project.

Once you’ve sorted out all of these questions and have high-level answers, you’re ready to get your hands dirty in the data acquisition and understanding phase.

Data Acquisition and Understanding (DAU)

The DAU phase is when you actually get into the data and start investigating what you have. It is where all of your prior assumptions are tested: if we did a bad job in the previous BUPD phase, it will be revealed now. What happens in this phase?

- Data acquisition – get data from your client.

  - Multiple data sources

  - Take time acquiring the data

  - Understand the data volume and how the data will be transferred

  - Acknowledge and protect privacy

  - Encryption standards

  - Query

- Data cleaning – alignment, normalization and removal of bad values.

  - Align multiple tables

  - Filter out certain values, errors, etc.

  - Get help from domain experts

- Data processing – convert data into an appropriate form for modeling.

  - Label data for supervised learning

- Data pipeline – refine our data regularly as part of the ongoing learning process.

  - Identify steps and reusable code

- Exploratory data analysis – gain a deeper understanding of the data.

  - Confirm certain assumptions you or the business had

  - The data might contradict some of those beliefs

  - Limit how much time you spend doing EDA

- Feature engineering – identify the features in the data.

After you refine your raw data, the refined dataset will usually be smaller than the original raw dataset, because cleaning and processing generally mean throwing away a lot of useless data. So you need to examine how your refined data informs the decisions you made earlier in the BUPD phase, and this is where iteration begins.
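The cleaning and pipeline steps listed above can be sketched in plain Python. This is a minimal illustration under assumed inputs: two hypothetical tables (customers and transactions), made-up validity rules, and min-max scaling as the chosen normalization. Real projects would typically use a dataframe library, and the rules for bad values should come from domain experts. The example also shows the refined data ending up smaller than the raw data.

```python
# Hypothetical raw tables from two client sources (names and values are made up).
customers = [
    {"id": 1, "age": 34},
    {"id": 2, "age": None},   # missing value
    {"id": 3, "age": 230},    # implausible value
    {"id": 4, "age": 51},
]
transactions = [
    {"customer_id": 1, "amount": 120.0},
    {"customer_id": 2, "amount": 35.5},
    {"customer_id": 4, "amount": -10.0},  # error: negative amount
    {"customer_id": 4, "amount": 80.0},
    {"customer_id": 9, "amount": 60.0},   # no matching customer
]


def align(customers, transactions):
    """Align the two tables by joining on customer id (inner join)."""
    by_id = {c["id"]: c for c in customers}
    return [
        {**t, "age": by_id[t["customer_id"]]["age"]}
        for t in transactions
        if t["customer_id"] in by_id
    ]


def drop_bad_values(rows):
    """Remove rows with missing or implausible values (assumed rules)."""
    return [
        r for r in rows
        if r["age"] is not None and 0 < r["age"] < 120 and r["amount"] >= 0
    ]


def normalize_amount(rows):
    """Min-max scale 'amount' into [0, 1]."""
    if not rows:
        return rows
    lo = min(r["amount"] for r in rows)
    hi = max(r["amount"] for r in rows)
    span = (hi - lo) or 1.0  # avoid division by zero when all amounts are equal
    return [{**r, "amount": (r["amount"] - lo) / span} for r in rows]


# Reusable pipeline: the same ordered steps can be rerun when new raw data arrives.
PIPELINE = [drop_bad_values, normalize_amount]


def run_pipeline(customers, transactions):
    rows = align(customers, transactions)
    for step in PIPELINE:
        rows = step(rows)
    return rows


if __name__ == "__main__":
    refined = run_pipeline(customers, transactions)
    print(f"raw transactions: {len(transactions)}, refined rows: {len(refined)}")
    for row in refined:
        print(row)
```

Keeping each step as a small function is one way to get the "reusable code" the pipeline item asks for: steps can be tested individually and reordered or extended as the data is better understood.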

Metadata

It turns out that data alone, without the context of how it was collected, stored, processed, and monitored, isn’t nearly sufficient for responsible machine learning. We also need metadata. Metadata is simply data about data: a description and context for the data. It helps organize, find, and understand data.

The identification and curation of metadata is an important process. The most important things to keep in mind when collecting data are:

- What additional context can I provide?

- How will I keep it with the data itself?

When you’re looking at different data sources, pay attention to the metadata as well as the raw material. Well curated metadata can be crucial for machine learning success.
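One lightweight way to keep metadata with the data is to carry both in the same object and record every processing step applied to it. The sketch below is a hypothetical design, not a standard format or library API; the `Dataset` class, its fields, and the example values are all assumptions.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Dataset:
    """Rows plus metadata describing how they were collected and processed."""
    rows: list
    source: str                 # where the data came from
    collected_on: date          # when it was collected
    units: dict                 # column -> unit of measurement
    history: list = field(default_factory=list)  # processing steps applied so far

    def apply(self, step_name, func):
        """Apply a processing step and record it, so context travels with the data."""
        return Dataset(
            rows=func(self.rows),
            source=self.source,
            collected_on=self.collected_on,
            units=dict(self.units),
            history=self.history + [step_name],
        )


if __name__ == "__main__":
    raw = Dataset(
        rows=[{"temp": 21.5}, {"temp": None}, {"temp": 19.0}],
        source="hypothetical-sensor-export.csv",
        collected_on=date(2020, 1, 15),
        units={"temp": "degrees Celsius"},
    )
    clean = raw.apply("drop missing temp",
                      lambda rs: [r for r in rs if r["temp"] is not None])
    print(len(clean.rows), clean.units, clean.history)
```

Returning a new object from `apply` leaves the raw dataset and its metadata untouched, so you can always trace the refined data back to its origin.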

Multi-modal Data

How do you combine very different data types? Sometimes you get data of different types, such as images, numbers, and binary signals, and it can be hard to combine them so that they can be used effectively as training data.

Part of the machine learning process is:

- considering what transformation steps are required

- knowing what your data actually means in your problem domain

Knowing what your data means helps you decide which transformation steps are most important.
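A simple way to combine modalities is to transform each one into numbers on a comparable scale and concatenate them into one feature vector per example (often called early fusion). The sketch below assumes tiny grayscale images as rows of 0-255 integers, one numeric column, and a list of boolean signals per example; it is only one option among many, and the function names are hypothetical.

```python
from statistics import mean, pstdev


def image_features(pixels):
    """Flatten a grayscale image (rows of 0-255 ints) and scale to [0, 1]."""
    return [p / 255 for row in pixels for p in row]


def standardize(values):
    """z-score a numeric column so it is on a scale comparable to other features."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]


def combine(images, numeric_column, binary_signals):
    """Concatenate per-example features from each modality into one vector."""
    scaled = standardize(numeric_column)
    return [
        image_features(img) + [num] + [float(b) for b in bits]
        for img, num, bits in zip(images, scaled, binary_signals)
    ]


if __name__ == "__main__":
    images = [[[0, 255], [128, 64]], [[255, 255], [0, 0]]]  # two tiny 2x2 images
    ages = [30, 50]                                          # one numeric feature
    flags = [[True, False], [False, False]]                  # two binary signals
    for vec in combine(images, ages, flags):
        print(vec)
```

Which transformation is right for each modality (scaling, standardizing, encoding) depends on what the data means in your problem domain.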

Identify the Data You Need

The quality of the data you have is going to be critical for answering the question “is machine learning going to help?” It’s important to think about how your model is going to be used and to find the correct population to collect data from. Then it is time to start thinking about which features to include in the model, guided by the question “what data will be available at the time of prediction?”

It’s important to consider what information you will have available at the time your model is used. Anything that would be collected after that time should be omitted from your model. It’s also important, when selecting your data, to make sure the data is representative of the situation where you plan to use your model.
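The "available at prediction time" rule can be enforced mechanically when each feature value carries a timestamp. This minimal sketch assumes observations are `(feature_name, value, observed_at)` tuples and uses a hypothetical loan-default example; it keeps only values observed at or before the prediction time, using the latest such value for each feature.

```python
from datetime import datetime


def features_at_prediction_time(observations, prediction_time):
    """Keep only feature values observed at or before the moment of prediction.

    observations: list of (feature_name, value, observed_at) tuples.
    Anything recorded later would not exist when the model is used, so training
    on it would make offline results look better than real-world performance.
    """
    usable = {}
    # Sort by time so that, for repeated features, the latest valid value wins.
    for name, value, observed_at in sorted(observations, key=lambda o: o[2]):
        if observed_at <= prediction_time:
            usable[name] = value
    return usable


if __name__ == "__main__":
    # Hypothetical example: predicting loan default at application time.
    applied = datetime(2021, 3, 1, 9, 0)
    observations = [
        ("income", 52_000, datetime(2021, 3, 1, 8, 55)),
        ("credit_score", 710, datetime(2021, 2, 20)),
        ("missed_payments_first_year", 2, datetime(2022, 3, 1)),  # future info
    ]
    print(features_at_prediction_time(observations, applied))
```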

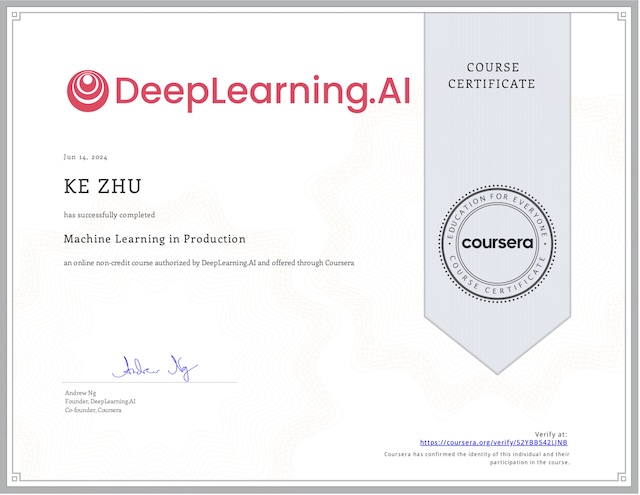

My Certificate

For more on Understanding Your Machine Learning Problems and Data, please refer to the wonderful course here https://www.coursera.org/learn/data-machine-learning


I am Kesler Zhu. Thank you for visiting my website. Check out more course reviews at https://KZHU.ai